By Prashant Kokane

In vehicles equipped with Advanced Driver Assistance Systems (ADAS) or in Autonomous Driving (AD) vehicles, the human driver depends on electrical/electronic systems for control of the vehicle. The ADAS/AD systems perform critical tasks of maneuvering and driving the vehicle in traffic, on various types of roads (highway/city) and in different environmental conditions (day/night/rain/fog). It is therefore very important to make these ADAS/AD systems robust so that the user can rely on them.

Man-made systems can be designed to be reliable for the intended functionality but cannot be fault-free. Mechatronic, electrical, or electronic system components can deteriorate, or the information flowing within or from outside the vehicle systems can be corrupted. For example, a sensor malfunction may provide incorrect data, or a cyber-attack could interfere and cause a failure. Engineers must therefore explore the ways in which a system can fail, implement mechanisms to detect the failures, and perform appropriate actions in time to take the system to a safe state.

A simple example of this could be the failure or malfunction of a camera sensor in an ADAS vehicle. As soon as the camera malfunction is detected, the system must inform the driver, ask him/her to take control of the vehicle, and shut down the ADAS system.

Detection and mitigation of all possible failures in the system must be integrated with the functional design to make the system safe and avoid potential hazards or accidents.

A systematic way to analyse and identify the different root causes by which an Electrical/Electronic (E/E) system in a vehicle can fail is termed Functional Safety analysis of the system. In the automotive domain, the ISO 26262 standard provides guidelines to make the system functionally safe against malfunctions.

Here is a look at how implementing Functional Safety according to ISO 26262 makes a system safe against malfunctions.

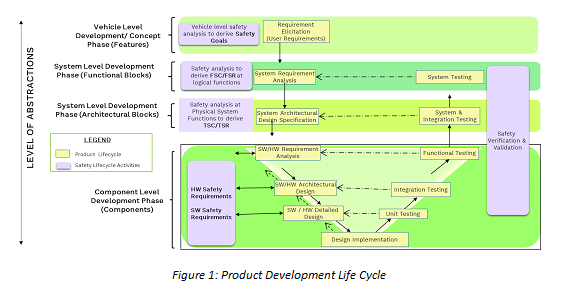

A typical automotive product development life cycle begins with vehicle-level concept development, followed by system design, hardware and software design, and implementation. It ends with verification and validation of each phase of development.

At each stage, one must carry out safety analysis activities to identify loopholes or dependencies that may lead to a hazardous situation or violate a safety requirement derived in the previous phase of the development cycle.

Setting safety goals

While developing the ADAS feature ‘Highway Assist (HWA),’ at the concept stage of development, the HWA system is analysed on the basis of vehicle-level function failures like loss of vehicle deceleration or braking function. These vehicle-level function failures, combined with different driving scenarios, can lead to multiple hazardous situations of varying degrees of severity.

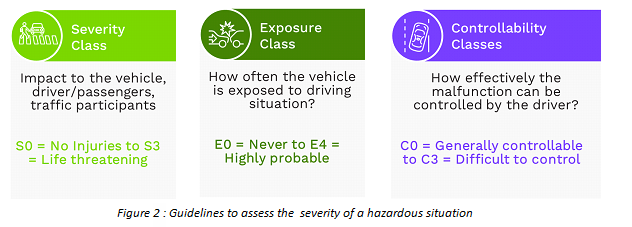

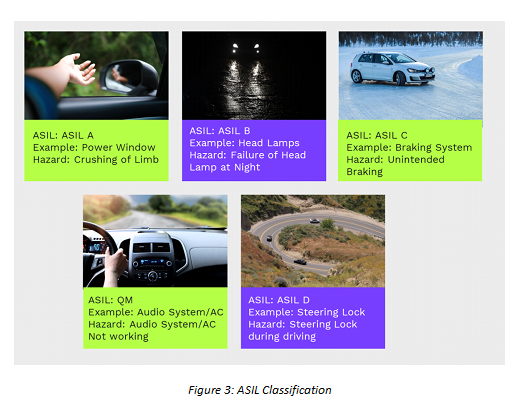

The ISO 26262 standard provides a guideline to assess the severity (Severity Classes) of all situations and provides a safety rating system called Automotive Safety Integrity Level (ASIL). Engineers must use a combination of the Severity Classes and ASIL ratings shown in Fig 2 and Fig 3 to develop appropriate ‘Safety Goals.’ For instance, in the above-mentioned example, ‘loss of vehicle deceleration for highway assist’, the safety goal would be to avoid ‘unintended deceleration.’
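As a rough illustration of how an ASIL rating can be derived, the sketch below assumes the classification used in ISO 26262-3, where each hazardous event is rated for Severity (S1 to S3), Exposure (E1 to E4) and Controllability (C1 to C3). Exposure and Controllability are not discussed in this article, and the "sum" shortcut is only a compact way to reproduce the standard's lookup table for these classes; the function name and the example ratings are assumptions made for illustration, and the standard itself remains the authority.

```python
from enum import IntEnum


class Severity(IntEnum):
    S1 = 1  # light and moderate injuries
    S2 = 2  # severe injuries, survival probable
    S3 = 3  # life-threatening or fatal injuries


class Exposure(IntEnum):
    E1 = 1  # very low probability of being in the situation
    E2 = 2  # low probability
    E3 = 3  # medium probability
    E4 = 4  # high probability


class Controllability(IntEnum):
    C1 = 1  # simply controllable
    C2 = 2  # normally controllable
    C3 = 3  # difficult to control or uncontrollable


def determine_asil(s: Severity, e: Exposure, c: Controllability) -> str:
    """Map an S/E/C rating to an ASIL using the sum shortcut.

    Assumption: classes S0, E0 and C0 (no ASIL required) are left out.
    A sum of 10 gives ASIL D, 9 gives C, 8 gives B, 7 gives A, and
    anything lower is QM (quality management only).
    """
    total = int(s) + int(e) + int(c)
    return {7: "ASIL A", 8: "ASIL B", 9: "ASIL C", 10: "ASIL D"}.get(total, "QM")


# Illustrative (assumed) rating for a hazardous event on a highway.
print(determine_asil(Severity.S3, Exposure.E4, Controllability.C2))
```

The ratings passed in the last line are placeholders; in a real hazard analysis they come from the assessment of each driving scenario.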

Identifying possible malfunctions

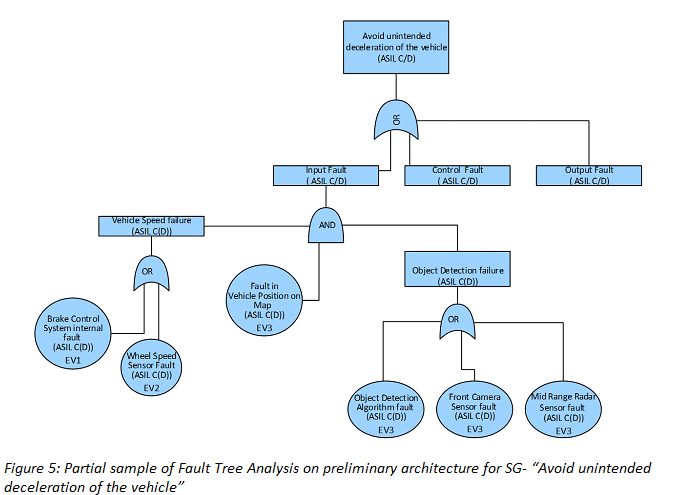

At the next stage of the analysis, the different malfunctions that may cause the unintended deceleration should be examined.

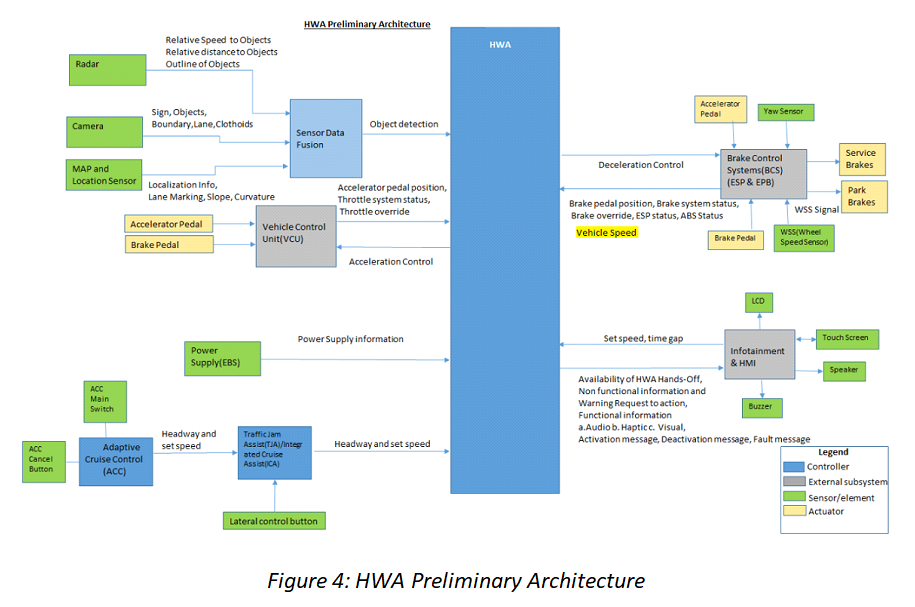

Based on the preliminary architecture (Figure 4), a Fault Tree Analysis (FTA) (Figure 5) is carried out to identify possible root causes contributing to violation of the safety goal, i.e., unintended deceleration.
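The idea behind a fault tree can be sketched in a few lines of code. The tree below is hypothetical: apart from the wheel speed sensor fault, the basic events are invented for illustration and are not taken from the figures. The sketch evaluates whether a set of active faults triggers the top event ‘unintended deceleration’ and brute-forces the minimal cut sets, i.e. the smallest fault combinations that violate the safety goal.

```python
from itertools import combinations

# Hypothetical fault tree for the top event "unintended deceleration".
# A node is either a basic-event name (str) or a gate: (kind, [children]).
FAULT_TREE = ("OR", [
    "wheel_speed_sensor_fault",
    "brake_actuator_stuck",
    ("AND", ["camera_false_object", "radar_plausibility_check_failed"]),
])


def top_event_occurs(node, active_faults):
    """Return True if the active basic faults trigger this node."""
    if isinstance(node, str):
        return node in active_faults
    kind, children = node
    results = [top_event_occurs(child, active_faults) for child in children]
    return all(results) if kind == "AND" else any(results)


def basic_events(node):
    """Collect the names of all basic events below a node."""
    if isinstance(node, str):
        return {node}
    events = set()
    for child in node[1]:
        events |= basic_events(child)
    return events


def minimal_cut_sets(tree):
    """Brute-force the smallest fault combinations that trigger the top event."""
    events = sorted(basic_events(tree))
    cut_sets = []
    for size in range(1, len(events) + 1):
        for combo in combinations(events, size):
            candidate = set(combo)
            if any(found <= candidate for found in cut_sets):
                continue  # a smaller cut set already covers this combination
            if top_event_occurs(tree, candidate):
                cut_sets.append(candidate)
    return cut_sets


for cut_set in minimal_cut_sets(FAULT_TREE):
    print(sorted(cut_set))
```

Single-event cut sets are the single points of failure, which is why each of them typically needs its own detection mechanism. Brute force is only practical for small trees; dedicated FTA tools use more efficient algorithms.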

Thus, based on the derived safety goals, engineers analyse the preliminary architecture to identify all dependencies that can trigger a safety goal violation and derive the ‘functional safety requirements’ and the ‘functional safety concept.’

Two examples of Functional Safety Requirements (FSR) are as follows:

·FSR-1: A Wheel Speed Sensor fault must be detected.

·FSR-2: The HWA system must notify the driver to take control of the vehicle within a designated time (1 second) of a Wheel Speed Sensor fault occurring.
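To make the two requirements concrete, here is a minimal sketch of a monitor that detects a wheel speed sensor fault and checks that the driver notification arrives within the 1-second budget. The class name, the plausibility range and the use of plain timestamps in seconds are assumptions for illustration; a production implementation would run on the ECU with its own diagnostics and timing services.

```python
from dataclasses import dataclass
from typing import Optional

NOTIFY_DEADLINE_S = 1.0          # designated time from FSR-2
MAX_PLAUSIBLE_SPEED_KPH = 300.0  # assumed plausibility limit, not from the article


@dataclass
class WheelSpeedMonitor:
    """Hypothetical monitor covering FSR-1 (detect) and FSR-2 (notify in time)."""
    fault_detected_at: Optional[float] = None
    driver_notified_at: Optional[float] = None

    def check_sample(self, speed_kph: Optional[float], now_s: float) -> bool:
        """FSR-1: flag a missing or implausible sample as a sensor fault."""
        faulty = speed_kph is None or not (0.0 <= speed_kph <= MAX_PLAUSIBLE_SPEED_KPH)
        if faulty and self.fault_detected_at is None:
            self.fault_detected_at = now_s  # remember the first detection time
        return faulty

    def notify_driver(self, now_s: float) -> None:
        """Record when the take-over request was shown to the driver."""
        if self.fault_detected_at is not None and self.driver_notified_at is None:
            self.driver_notified_at = now_s

    def fsr2_satisfied(self, now_s: float) -> bool:
        """FSR-2: the notification must come within the deadline after detection."""
        if self.fault_detected_at is None:
            return True  # no fault, nothing to notify
        reference = self.driver_notified_at if self.driver_notified_at is not None else now_s
        return reference - self.fault_detected_at <= NOTIFY_DEADLINE_S


monitor = WheelSpeedMonitor()
monitor.check_sample(None, now_s=10.0)  # sensor signal lost
monitor.notify_driver(now_s=10.4)       # take-over request issued
print(monitor.fsr2_satisfied(now_s=10.4))
```

Keeping detection and the deadline check separate mirrors the split between the two requirements, so each can be verified on its own.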

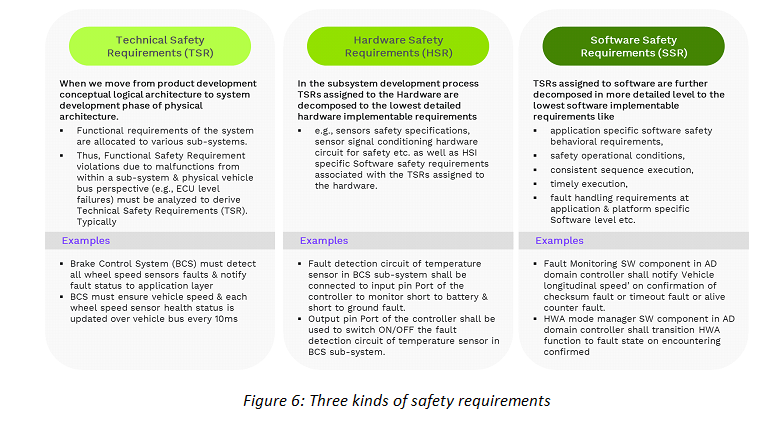

Building safety requirements

Safety requirements specify what the system must do to detect a fault and what actions it should perform once a fault is detected, so that the resulting hazard can be avoided in time.

Safety requirements ensure that on detection of a fault, the system transitions to a safe state before the hazard occurs. All these safety requirements, from fault detection to fault handling to timely transition to a safe state, together with the required updates to the existing concept/architecture/design, form a safety concept.

Hence, at each level of product development maturity, we identify dependencies that violate the safety goal or review the safety requirements of the previous development stage to create successive levels of safety requirements and safety concepts.

In addition to Fault Tree Analysis (FTA), we also use other safety analysis methodologies like Failure Mode and Effects Analysis (FMEA) and Dependent Failure Analysis (DFA) for more detailed analysis at subsequent product development stages.

The three types of safety requirements are shown in Figure 6.

The Functional Safety Standard ISO 26262 also provides additional guidelines for components being developed out of context and for the fail-safe operational concept.

Safety element out of context (SEooC)

Organisations that focus on developing software components without any system context face challenges. For example, while developing software components like AUTOSAR/middleware or operating systems, the software development team may not know in which ADAS/AD application or system their software component will be used. In such situations, some assumptions about the system context must be made during development to comply with safety standards. The ISO 26262 Functional Safety standard provides guidelines for such an approach under Safety Element out of Context (SEooC).

Fail-safe operational concept

For ADAS vehicles up to Level 3, a human driver is present, and once a failure is detected, the driver can be asked to take control of the vehicle and stop it safely.

But at higher levels of autonomy, i.e., Level 3+ or Level 4/5, there may be no human driver to take control of the vehicle in case of a failure. In such scenarios, the system must be capable of detecting the failure, operating the vehicle with a minimal risk maneuver, and stopping the vehicle at a safe place.

These aspects of safety are analysed and designed using the fail-safe operational concept. In this process, one must perform dependent failure analysis and freedom from interference analysis, and develop a redundant system to ensure operation of the vehicle even in a critical malfunction situation, i.e., the system must have redundancy through a secondary camera or the use of other sensors (Radar/Lidar) to bring the vehicle to a safe state (park to the side).
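The fallback logic described above can be sketched as a small decision function. Everything named here is hypothetical: the sensor keys, the action names and in particular the last-resort emergency stop, which is an assumption added so the function always returns a defined action and is not part of the article.

```python
from enum import Enum
from typing import Dict, Optional, Tuple


class Action(Enum):
    CONTINUE = "continue normal operation"
    DRIVER_TAKEOVER = "request driver take-over"
    MINIMAL_RISK_MANEUVER = "pull over and stop using a redundant sensor"
    EMERGENCY_STOP = "controlled stop in lane"  # assumed last resort, not from the article


# Assumed priority order of redundant sensors after a primary camera failure.
REDUNDANT_SENSORS = ("secondary_camera", "radar", "lidar")


def select_fallback(sensor_ok: Dict[str, bool], driver_present: bool) -> Tuple[Action, Optional[str]]:
    """Choose the reaction to the current sensor health.

    Returns the action and, when a redundant sensor is used, its name.
    """
    if sensor_ok.get("primary_camera", False):
        return Action.CONTINUE, None
    if driver_present:
        # Up to Level 3: hand control back to the human driver.
        return Action.DRIVER_TAKEOVER, None
    for name in REDUNDANT_SENSORS:
        if sensor_ok.get(name, False):
            # Higher autonomy: reach a safe state with the remaining sensing.
            return Action.MINIMAL_RISK_MANEUVER, name
    return Action.EMERGENCY_STOP, None


health = {"primary_camera": False, "secondary_camera": False, "radar": True, "lidar": True}
action, sensor = select_fallback(health, driver_present=False)
print(action.value, "via", sensor)
```

A real design would also have to show, through dependent failure analysis, that the redundant sensors do not share a common cause of failure with the primary camera; the sketch simply takes their health flags as given.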

In summary, at every development stage, from concept through system, hardware and software development, the safety requirements and their implementation are verified and validated for that stage. This ensures that development at each stage complies with safety requirements and processes, helping to develop a safe, robust product.

(Disclaimer: Prashant Kokane is Senior Solution Architect at KPIT Technologies. Views are personal.)
